Independent research from MIT. Structured risk taxonomies, expert surveys, governance mapping, incident tracking, and regulatory compliance, all in one place.

Publicly reported AI incidents have more than tripled annually since 2020, across domains from healthcare to finance to criminal justice.

Only 15% of the risks experts rate as critical severity are substantively addressed in corporate AI governance documents.

128 new AI-specific laws, regulations, and binding guidelines were enacted globally in 2025, up from 37 in 2022.

Only ~14% of the world's largest AI companies mention catastrophic or existential risk in any public governance document.

2,000+ specific mitigations extracted from 70+ documents, organized into 4 categories and 23 subcategories.

Featured in

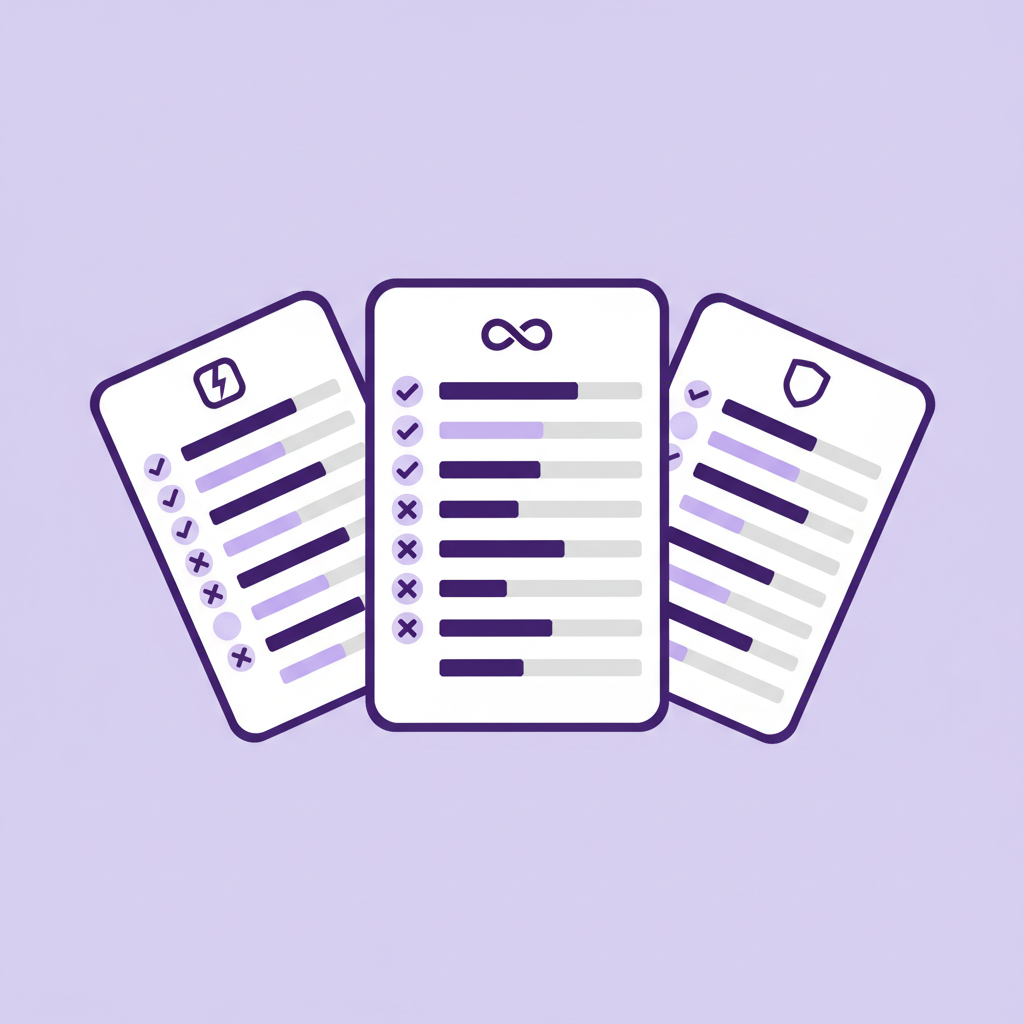

How ~200 major organizations respond to AI risks based on public documentation. Uses our risk and mitigation taxonomies to map coverage gaps across sectors.

Three-round Delphi study with 272 international AI experts. Experts rated 14 of 24 risk domains as having a 10% or greater probability of catastrophic outcomes under business as usual.

780+ frameworks and 1,725+ classified risks across 24 domains, organized into a causal taxonomy.

2,000+ mitigations from 70+ documents organized into a structured taxonomy.

Live database tracking reported AI incidents across sectors, linked to our risk taxonomy.

In partnership with CSET, tracking global AI governance policies, laws, and frameworks.

Testimonial

"A valuable database for accessing current and comprehensive information on a wide range of AI-related risks. This resource helps fill a critical information gap."

Yoshua Bengio

Turing Award winner, Mila


"A monumental body of work and a critical piece of the AI Governance puzzle. If you want to understand AI risk, this is your one-stop shop."

Geoffrey M. Schaefer

VP, AI Strategy and Governance, Leidos


"A valuable taxonomy helping the policy community have more grounded discussions. It supports a more empirical approach in a field characterized by significant uncertainty."

Madhu Srikumar

Head of AI Safety Governance, Partnership on AI


"A key resource for organizations grappling with the realities of deploying AI. Its practical, evidence-based approach offers immediate benefits across the AI value chain."

Kevin Fumai

Asst. General Counsel, Oracle


Mapping AI Risk Mitigations: Evidence Scan and Draft Mitigation Taxonomy

Mapping the AI Governance Landscape: Pilot Test and Update

Repository Update: December 2025 (Version 4)

AI Risk Repository Report updated (April 2025)

Used by governments, industry, and academia across the globe.