What are the risks from AI?

This week we spotlight the 31st framework of risks from AI included in the AI Risk Repository: Electronic Privacy Information Center (2023). Generating harms: Generative AI’s impact and paths forward. https://epic.org/documents/generating-harms-generative-ais-impact-paths-forward/

Paper focus

This paper provides an outline of problems that could be caused by the rapid adoption of generative AI without adequate safeguards. It has been prepared by the Electronic Privacy Information Center (EPIC), a non-profit research and advocacy center for protecting privacy, freedom of expression, and democratic values.

Included risk categories

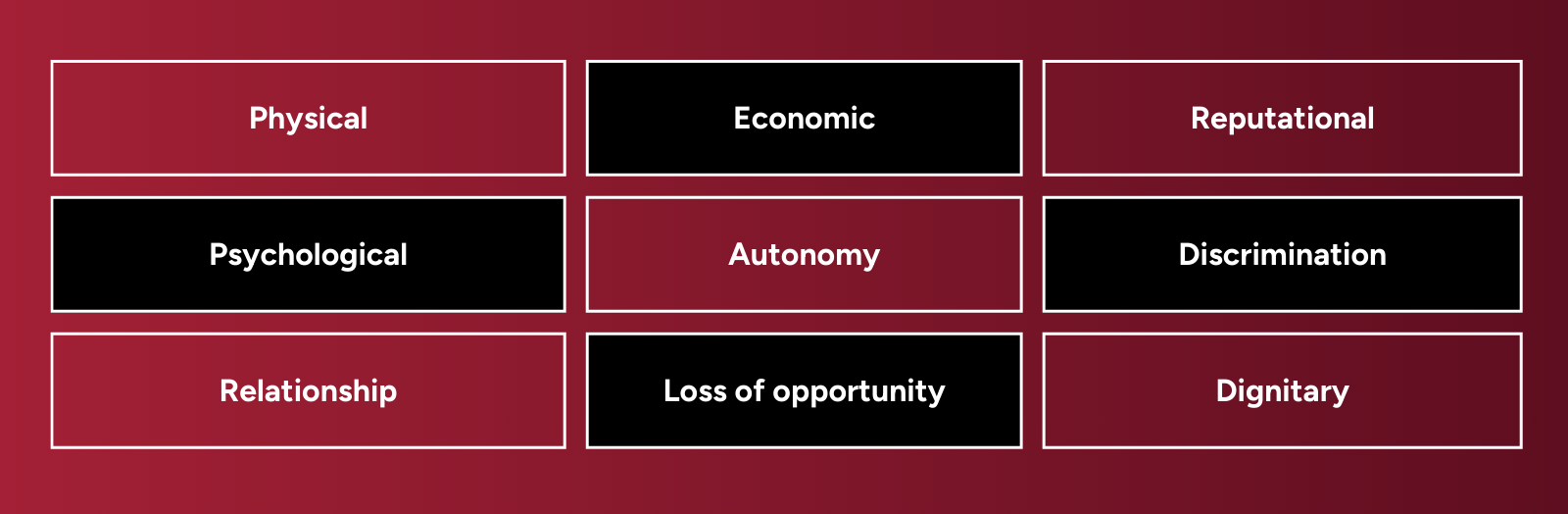

This paper draws on major taxonomies of AI harms to present an overview of 9 common harm categories caused by generative AI.

These are accompanied by real-world examples of harms caused by generative AI, including suicide, impersonation, deepfakes, defamation, sexualization, threats of physical harm, misinformation, copyright infringement, labor disputes, and data breaches.

Key features of the framework and associated paper

⚠️ Disclaimer: This summary highlights a paper included in the MIT AI Risk Repository. We did not author the paper and credit goes to the Electronic Privacy Information Center (EPIC). For the full details, please refer to the original publication: https://epic.org/documents/generating-harms-generative-ais-impact-paths-forward/

Further engagement

→ View all the frameworks included in the AI Risk Repository