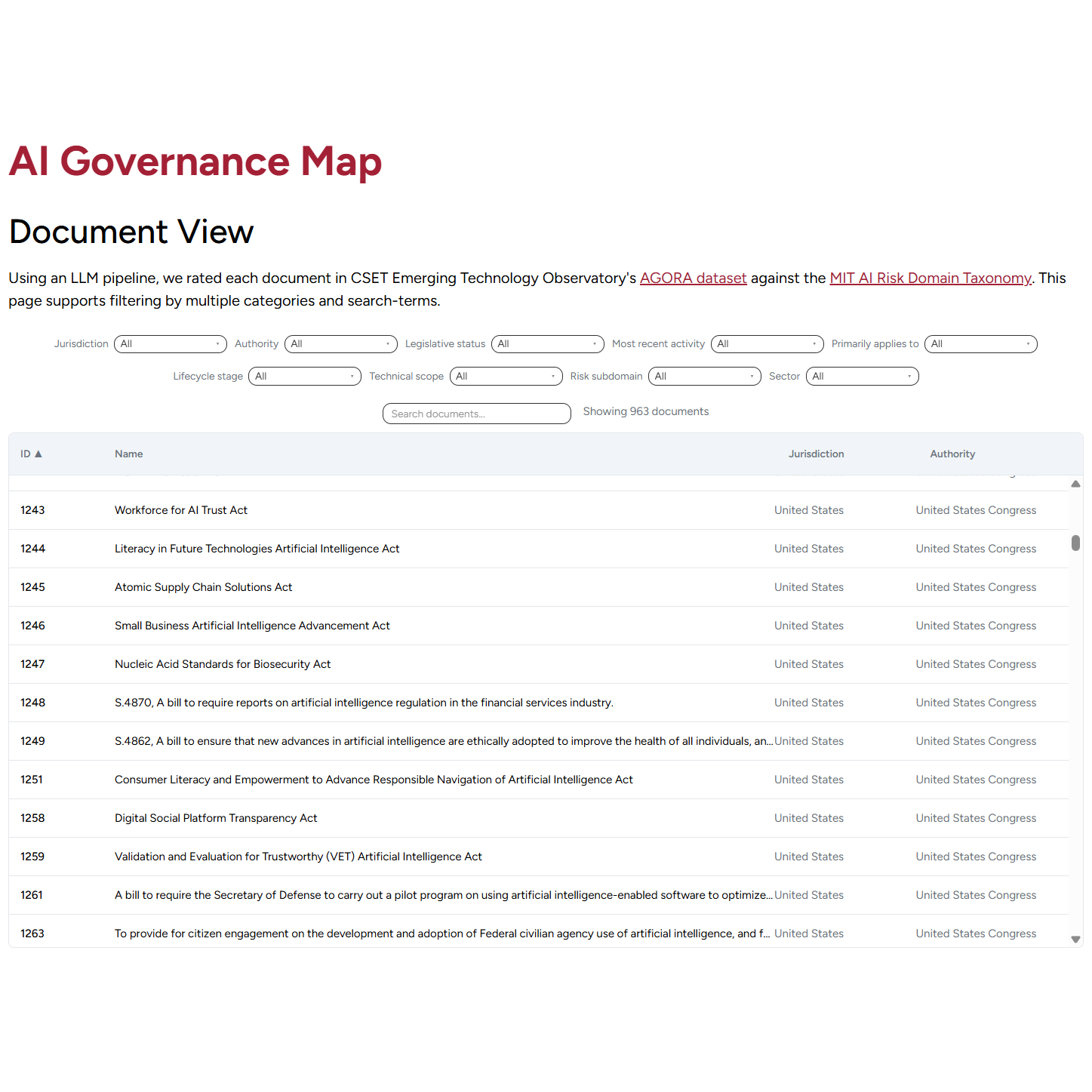

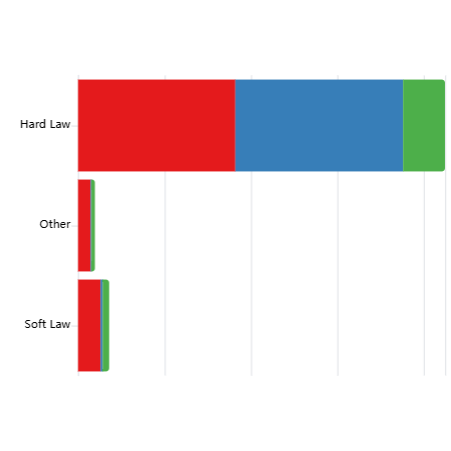

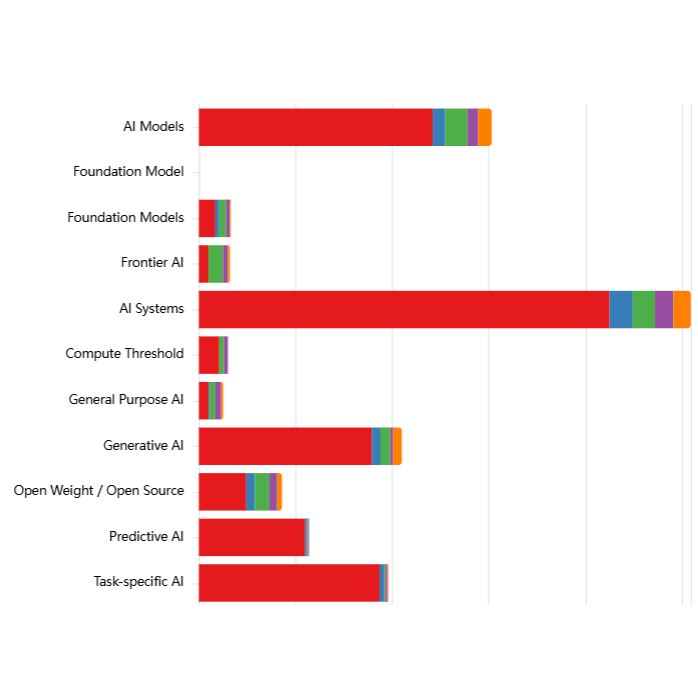

Using an LLM pipeline, we assessed each document in CSET Emerging Technology Observatory's AGORA dataset to identify which types of entities fulfil each of four roles in the governance process: Proposers (who draft governance instruments), Targets (who must comply), Enforcers (who oversee compliance), and Monitors (who track effectiveness).

Insights from Actor Analysis:

- Comparable responsibility: In our data, AI developers and deployers receive nearly identical levels of coverage as risk targets.

- Government perspective: Because most documents in the dataset are government-proposed, this pattern reflects how governments currently conceptualize the allocation of liability and responsibility within the AI value chain. It does not necessarily reflect how responsibility is, or should be, distributed in practice, given where risks emerge and how they can be effectively mitigated.

Explore the chart using the dropdown filters, click on a category name (Jurisdiction, Authority, Legislative Status, etc.) to see the distribution within that category, or click on one of the preset example configurations below the chart.

Interactive Chart

Preset example configurations:

- Actors targeted by documents, broken down by Jurisdiction (actorRole: targetTypes, stackBy: jurisdiction)
- Actors proposing documents, broken down by Authority (actorRole: proposerTypes, stackBy: authority)
- Actors enforcing compliance with documents, broken down by Most recent activity (actorRole: enforcerTypes, stackBy: activity)
- Actors monitoring compliance with documents, primarily applying to Private Sector, broken down by Legislative Status (actorRole: monitorTypes, appliesTo: Private sector, stackBy: legislativeStatus)
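Each preset above pairs an actor role with a category to stack by, plus an optional "applies to" filter. As a rough illustration of how such a preset could be turned into chart counts, here is a minimal Python sketch. It is not the chart's actual implementation: the function name `apply_preset`, the record layout, and all sample values are hypothetical, and only the filter keys (`actorRole`, `stackBy`, `appliesTo`) come from the presets themselves.

```python
from collections import Counter, defaultdict

# Hypothetical document records. The field names mirror the preset keys;
# the values are invented for illustration and are not AGORA data.
documents = [
    {"targetTypes": ["AI developers", "AI deployers"],
     "jurisdiction": "Jurisdiction A", "appliesTo": ["Private sector"]},
    {"targetTypes": ["AI developers"],
     "jurisdiction": "Jurisdiction B", "appliesTo": ["Government"]},
    {"targetTypes": ["AI deployers"],
     "jurisdiction": "Jurisdiction A", "appliesTo": ["Private sector", "Government"]},
]


def apply_preset(docs, filters):
    """Count documents per actor type, split by the stacking category."""
    role = filters["actorRole"]           # e.g. "targetTypes"
    stack_by = filters["stackBy"]         # e.g. "jurisdiction"
    applies_to = filters.get("appliesTo")  # optional list of sectors

    counts = defaultdict(Counter)
    for doc in docs:
        # Assumed semantics: keep a document if it shares at least one
        # "applies to" value with the filter.
        if applies_to and not set(applies_to) & set(doc.get("appliesTo", [])):
            continue
        group = doc.get(stack_by, "Unknown")
        for actor in doc.get(role, []):
            counts[actor][group] += 1
    return {actor: dict(groups) for actor, groups in counts.items()}


preset = {"actorRole": "targetTypes", "stackBy": "jurisdiction"}
by_jurisdiction = apply_preset(documents, preset)

private_only = apply_preset(
    documents,
    {"actorRole": "targetTypes", "appliesTo": ["Private sector"], "stackBy": "jurisdiction"},
)
print(by_jurisdiction)
print(private_only)
```

In this sketch a document that names several actor types is counted once for each of them, so totals across actors can exceed the number of documents. Whether the chart counts documents the same way is an assumption.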

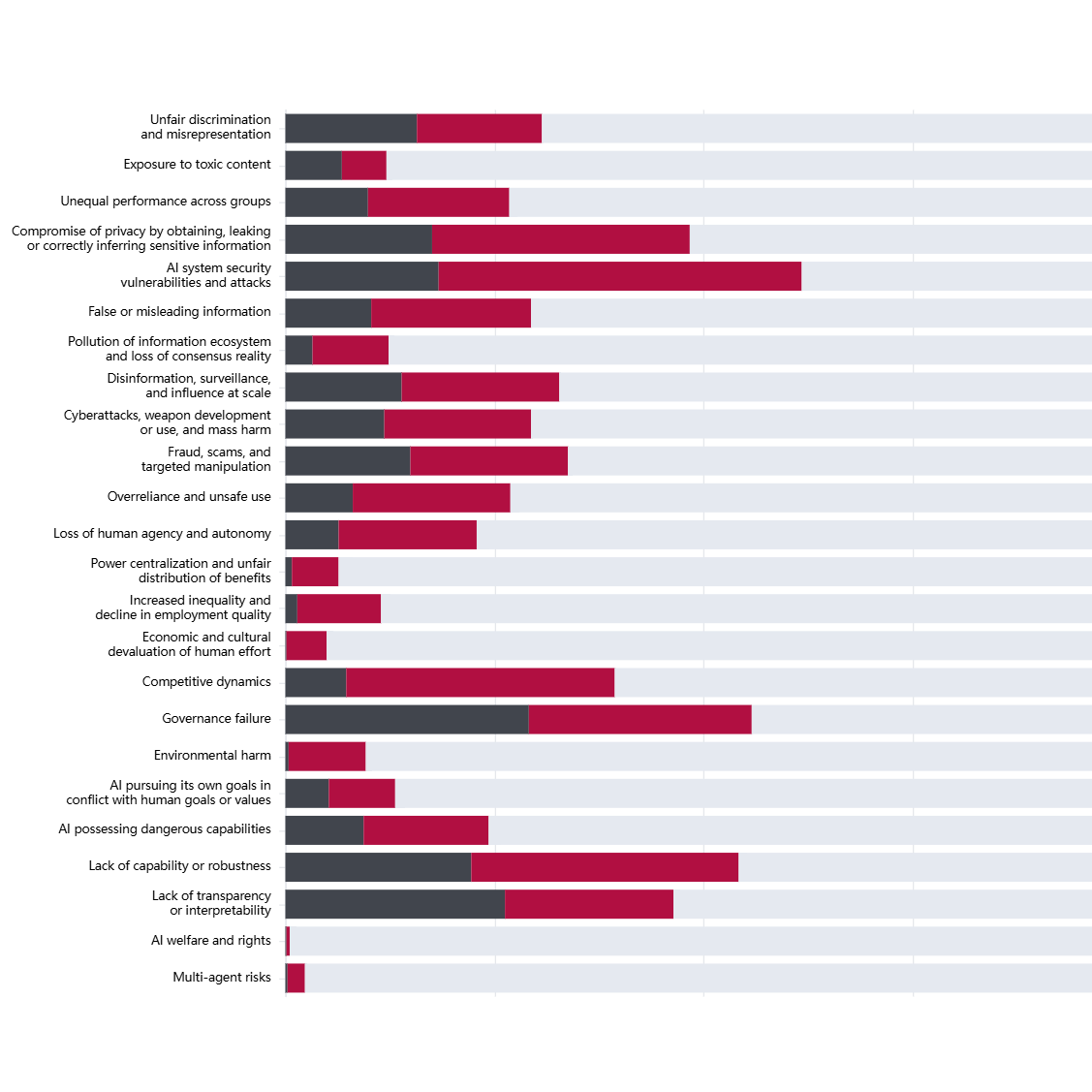

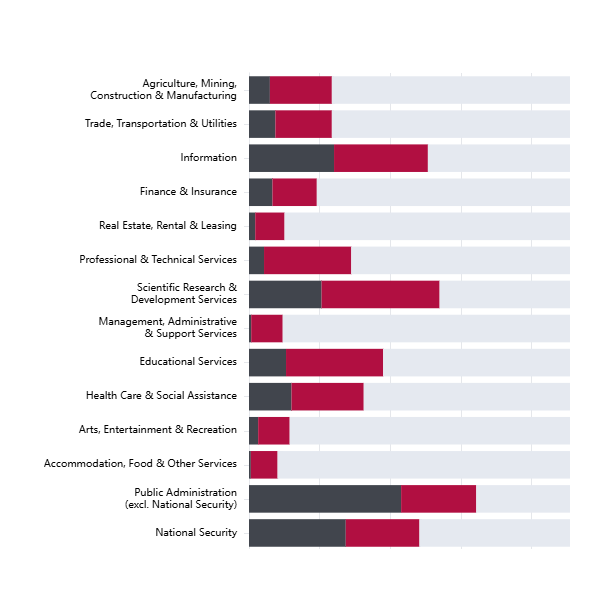

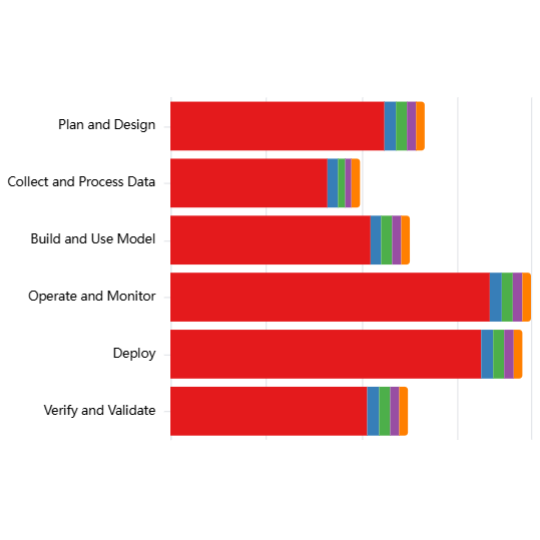

Important context for interpreting these results:

The LLM classifications are based on the approximately 1,000 documents in the AGORA dataset, which consists predominantly of U.S.-origin, English-language, government-proposed documents, most of them at the federal level.

The coverage patterns described therefore reflect the priorities and framing conventions of this particular corpus and should not be taken as representative of the global AI governance landscape. We also found that the LLM classifications exhibit some biases, including over-attribution of coverage when governance-related language is present. Coverage scores should be taken as indicative of broad patterns rather than as precise measurements.