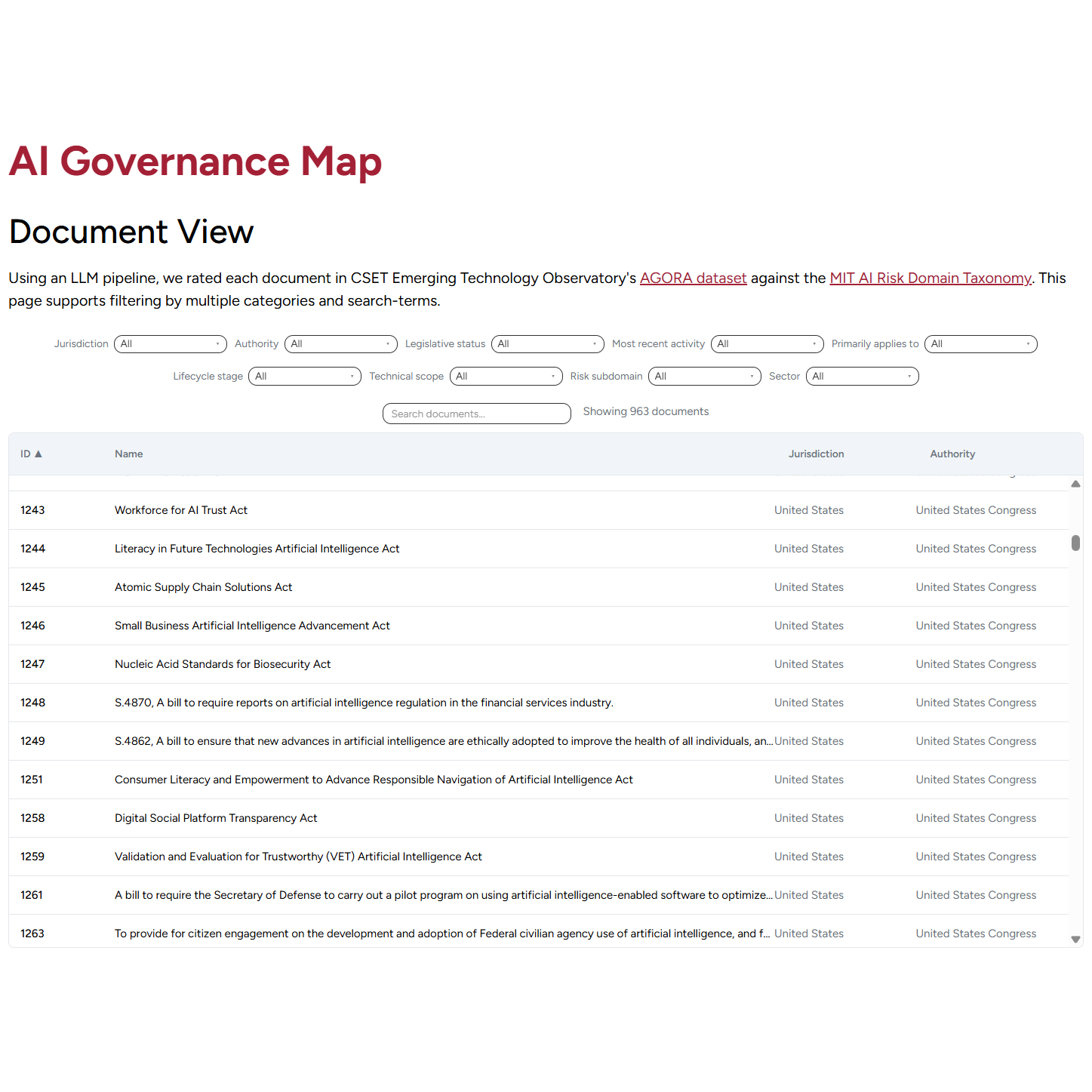

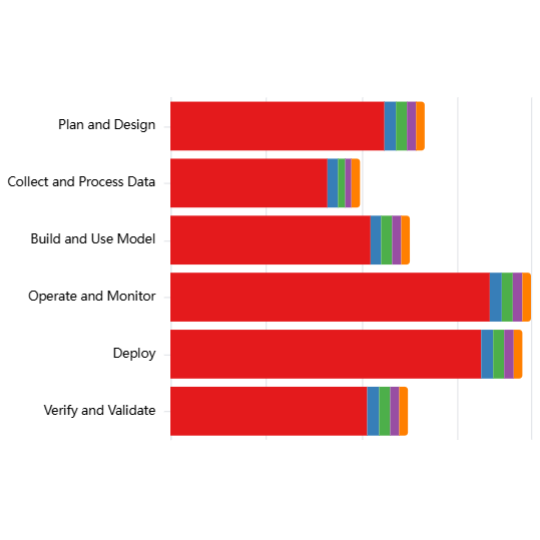

Using an LLM pipeline, we assessed whether each document in CSET Emerging Technology Observatory's AGORA dataset mentions any of 10 technical terms that define the scope of AI systems addressed in governance documents. See the pilot blog post for a discussion of the methodology.

Insights from the analysis of Technical Scope term mentions:

- Coverage patterns: “AI Systems” and “AI Models” receive broad coverage. “Task Specific AI”, “Predictive AI”, and “Generative AI” also receive substantial attention, though less than “AI Systems” and “AI Models”. Because “AI Systems” and “AI Models” encompass a wide range of technologies and use cases, this pattern suggests that current governance documents tend to regulate AI in general terms