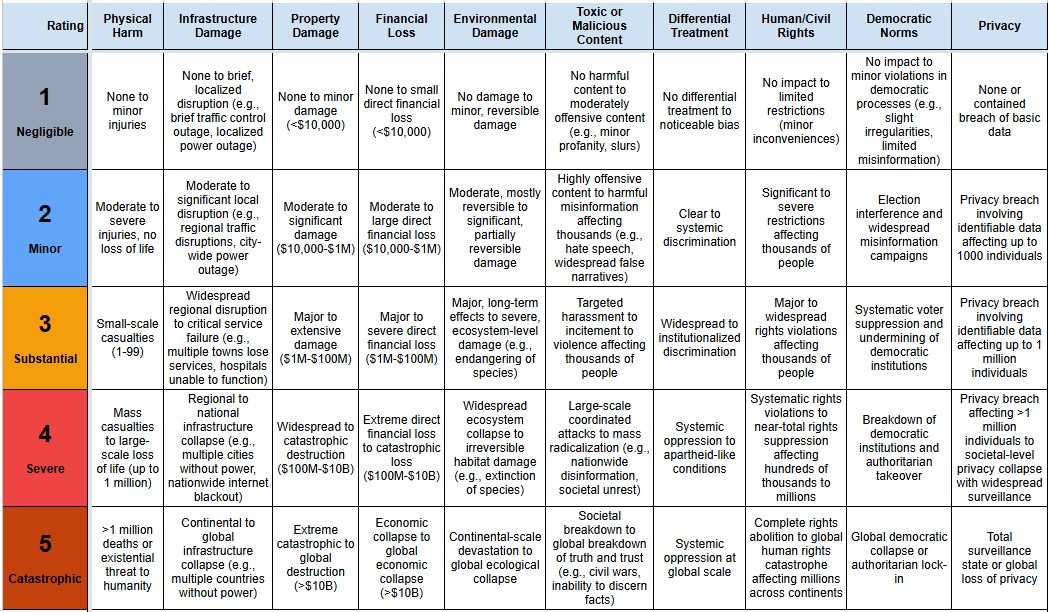

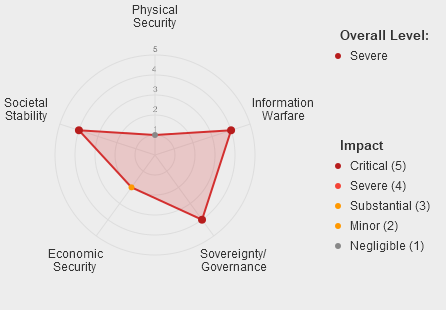

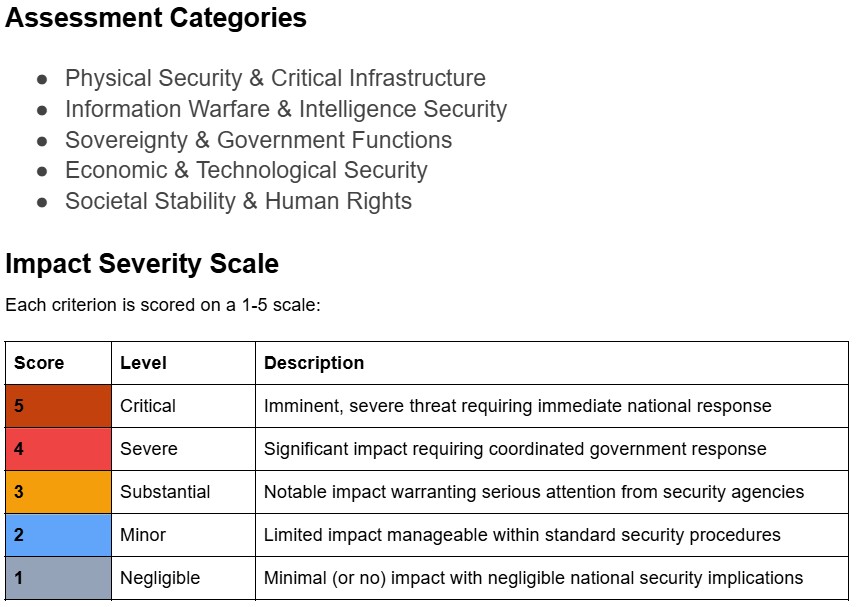

Using an LLM pipeline, we classified all the incidents in the AI Incident Database against the MIT Causal Taxonomy, the MIT Domain Taxonomy, the EU AI Act Risk Levels, and a harm severity scale covering 10 categories of harm.

Insights:

- The proportion of incidents in the following subdomains has risen clearly year on year since 2022: 4.3 Fraud, scams and targeted manipulation; 3.1 False or misleading information.

- The proportion of reported incidents attributed to lack of capability or robustness has decreased each year since 2021.

- The number of reported incidents attributed to systems whose purpose is video, voice or image generation has increased dramatically since 2022, and these now account for around half of reported incidents.

- The proportion of incidents classified as level 1 (Unacceptable) under the EU AI Act has increased each year since 2022, reaching 4% in 2025.

- The proportion of intentionally caused incidents has increased each year from 2021 to 2025, as has the proportion attributed to Human rather than AI entities.

- The overwhelming majority of incidents assessed were classified as post-deployment, with no more than a few percent classified as pre-deployment in any year.
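
The insights above are stated as proportions within each year rather than raw counts, which matters because the number of reported incidents differs from year to year. As a minimal sketch of that calculation, assuming each classified incident is a record with a `year` and one label per classification field (the record layout, the `yearly_proportions` helper and the sample values are all hypothetical, not the pipeline's actual output):

```python
from collections import Counter, defaultdict


def yearly_proportions(incidents, field):
    """Return {year: {label: share of that year's incidents}} for one field."""
    counts = defaultdict(Counter)
    for incident in incidents:
        counts[incident["year"]][incident[field]] += 1
    return {
        year: {label: n / sum(labels.values()) for label, n in labels.items()}
        for year, labels in sorted(counts.items())
    }


# Made-up records purely for illustration; not taken from the database.
sample = [
    {"year": 2023, "intent": "Intentional"},
    {"year": 2023, "intent": "Unintentional"},
    {"year": 2024, "intent": "Intentional"},
    {"year": 2024, "intent": "Intentional"},
    {"year": 2024, "intent": "Intentional"},
    {"year": 2024, "intent": "Unintentional"},
]

shares = yearly_proportions(sample, "intent")
for year, labels in shares.items():
    print(year, labels)
```

Normalising within each year means a rising share reflects a change in the mix of incidents, not just a change in how many were reported.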

Interactive Chart

Explore the chart by adding filters (e.g. Domain, Entity, Intent, Timing, AI Purpose) and stacking by different categories to see the distribution of incidents in each year.

Select incidents where any category of harm exceeded a severity threshold, or select harm severity thresholds for individual categories.

The links below the chart quickly apply preset example filter configurations.
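
The two harm severity filters described above can be read as two different predicates over an incident's per-category scores. The sketch below is one plausible reading, not the chart's actual implementation: it assumes integer severity levels, treats a threshold as an inclusive minimum, and requires all per-category thresholds to be met together. The category names, function names and sample incidents are hypothetical.

```python
def any_at_or_above(harm, threshold):
    """True if at least one harm category is at or above the threshold."""
    return any(level >= threshold for level in harm.values())


def meets_each(harm, thresholds):
    """True if every listed category is at or above its own threshold.

    Categories missing from `harm` are treated as severity 0 (an assumption).
    """
    return all(
        harm.get(category, 0) >= minimum
        for category, minimum in thresholds.items()
    )


# Made-up incidents with made-up category names, for illustration only.
incidents = [
    {"id": "A", "harm": {"physical": 0, "financial": 4, "psychological": 1}},
    {"id": "B", "harm": {"physical": 3, "financial": 1, "psychological": 3}},
    {"id": "C", "harm": {"physical": 1, "financial": 1, "psychological": 0}},
]

# Filter 1: any category reaches severity 3.
any_at_3 = [i["id"] for i in incidents if any_at_or_above(i["harm"], 3)]

# Filter 2: individual thresholds for selected categories.
per_category = [
    i["id"]
    for i in incidents
    if meets_each(i["harm"], {"physical": 2, "psychological": 2})
]

print(any_at_3)      # ['A', 'B']
print(per_category)  # ['B']
```

The first filter is the broader of the two: an incident passes as soon as one category is severe enough, whereas the per-category filter narrows the selection to specific kinds of harm.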